Julie Cohen, Nathan Jones, Vivian C. Wong, Alexa Quinn, Steffen Erickson, Hallie Parten, and Marissa Pilger Suhr

Imagine handing a future pilot a stack of articles about flying, asking them to reflect on what they read, and then sending them into the cockpit. It sounds absurd, and yet this is essentially how most teacher preparation programs have approached online learning modules for decades. Pre-service teachers (PSTs) read about classroom skills, respond to reflection prompts, and are expected to translate that knowledge into confident, effective teaching with real children.

Enrollment in traditional preparation programs has declined, and teacher preparation programs are under increasing pressure to lower the financial and time burden on prospective teachers. Online modules have emerged as one practical response. They are flexible, scalable, and can be embedded into existing coursework or offered entirely independently, making them especially attractive to the online-only and alternative certification programs that now dominate parts of the landscape. In theory, a well-designed module can deliver content that teacher educators don't have time to cover in class, reach teachers across geographic and institutional boundaries, and ensure some baseline consistency in what PSTs are exposed to. In practice, however, most of these modules include few or no opportunities to practice classroom skills before working with students.

This study addresses that gap. The researchers asked a simple question: what if online modules let teachers practice teaching, not just read about it? Specifically, the study tests whether an online module that requires PSTs to practice a targeted teaching skill in a simulated classroom and receive coaching feedback is more effective than the standard reading-and-reflection approach.

THE PRACTICE-BASED TEACHING MODULE

The practice-based module was built around a three-part framework for teaching a complex professional skill, metacognitive modeling, in which a teacher thinks aloud while working through a math word problem to help students develop their own self-monitoring strategies.

- Representations. PSTs watched video examples of metacognitive modeling in action, both strong examples and non-examples, so they could see what the skill actually looked like before trying it themselves. The module also included vignettes and written examples. The idea is that you can't approximate something you've never seen, so exposure to concrete models of the skill is the necessary starting point.

- Decompositions. The module included a detailed specification of the key components: stating an objective, unpacking the word problem context, demonstrating self-questioning (e.g., "What is this problem asking me to do?"), self-monitoring, and self-regulation. The goal is to make the implicit explicit: to help PSTs understand not just what good metacognitive modeling looks like but why each element matters and what it contributes to student learning.

- Approximations. PSTs practiced the skill twice in mixed-reality simulation sessions using Mursion software. They taught a small group of student avatars controlled by a live actor, practicing metacognitive modeling on actual math word problems (a 2nd-grade task and a 4th-grade task). Each simulation round was followed by coaching: the coach opened with a reflection question, reinforced one effective element the PST had demonstrated, gave a specific next step with a rationale, and then asked the teacher to plan how they'd incorporate that feedback in their next attempt. This cycle of practice, then feedback, then practice again is what the authors call "deliberate practice," and it's the core mechanism they credit for the module's effects on real classroom teaching.

STUDY AND METHODS

This study randomly assigned 149 PSTs across two university teacher preparation programs to one of two versions of a two-week online learning module. Both versions covered the same content: how to support students with mathematics disabilities using metacognitive modeling.

The two versions differed in how they taught this content:

- The reading and reflection group (the business-as-usual condition) read practitioner articles about metacognitive modeling and responded to reflection prompts, receiving written feedback on their responses.

- The practice-based group (the treatment condition) engaged with the same content through interactive digital modules and then participated in two rounds of simulated teaching sessions, followed by structured coaching sessions after each round.

Teachers were assessed in two distinct settings:

- The standardized performance tasks asked teachers to record themselves doing a metacognitive think-aloud for a hypothetical class, using a structured online platform with a timer. This was a more controlled, proximal setting, closer to the simulation experience.

- The clinical placement observations were 15–30 minute videos of teachers actually working with real students in their student teaching classrooms, where they were asked to use metacognitive modeling while helping students unpack word problems. This is a much harder test because real classrooms introduce complexity that a simulation can't replicate, such as student behavior, lesson pacing, and unexpected questions.

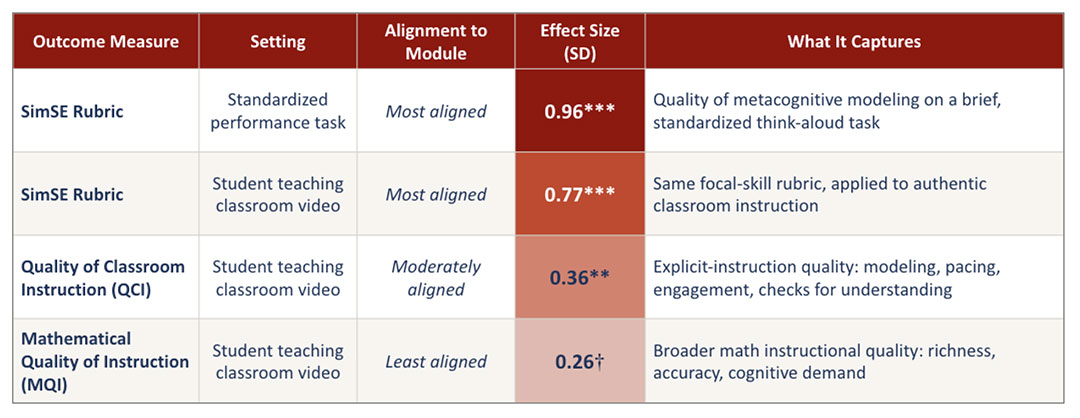

Researchers scored these videos using three observation rubrics that varied in how closely they were aligned to the targeted teaching skill: one tightly aligned rubric (SimSE) focused directly on metacognitive modeling, one moderately aligned rubric (QCI) focused on quality of explicit instruction, and one less aligned rubric (MQI) focused on overall mathematical quality of instruction.

KEY FINDINGS

The practice-based modules outperformed reading-and-reflection modules on all four outcome measures.

- Effect sizes followed the predicted pattern of declining magnitude as outcomes became less conceptually aligned with the intervention content.

- Largest effect on standardized performance tasks (SimSE rubric): 0.96 SD. This represented PSTs' ability to produce structured metacognitive models in a standardized assessment setting.

- Strong effect on classroom metacognitive modeling (SimSE rubric): 0.77 SD. This demonstrated skill transfer from simulated practice into real student teaching classrooms, despite the added complexity of live instruction.

- Moderate effect on Quality of Classroom Instruction (QCI): 0.36 SD. This was a measure of explicit instruction, including modeling, pacing, and student engagement, that was only moderately aligned with the focus skill.

- Smallest effect on Mathematical Quality of Instruction (MQI): 0.26 SD. This was just short of conventional significance thresholds, but consistent with the overall pattern. The MQI captures broader math instructional quality not directly targeted by the modules.

Table 1: The practice-based modules outperformed reading-and-reflection modules on all four outcome measures.
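For readers less familiar with effect sizes reported in SD units, the sketch below shows one common way such a number is computed: the difference in group means divided by the pooled standard deviation. This is an illustration only. The scores are made-up toy values, not data from the study, and the function name `standardized_mean_difference` is hypothetical; the working paper may estimate its effects with a different model (for example, regression with covariates).

```python
from math import sqrt
from statistics import mean, stdev


def standardized_mean_difference(treatment, control):
    """Difference in means divided by the pooled standard deviation."""
    n_t, n_c = len(treatment), len(control)
    # Pool the two sample variances, weighting each by its degrees of freedom.
    pooled_var = (
        (n_t - 1) * stdev(treatment) ** 2 + (n_c - 1) * stdev(control) ** 2
    ) / (n_t + n_c - 2)
    return (mean(treatment) - mean(control)) / sqrt(pooled_var)


# Toy rubric scores, invented purely for illustration (NOT study data).
practice_scores = [3.0, 4.0, 5.0, 4.0, 4.0]
reading_scores = [2.0, 3.0, 4.0, 3.0, 3.0]

effect_size = standardized_mean_difference(practice_scores, reading_scores)
print(f"Toy effect size: {effect_size:.2f} SD")
```

Read this way, an effect of 0.96 SD means the average PST in the practice-based group scored nearly one full standard deviation above the average PST in the reading-and-reflection group on that measure.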

- Teachers in the practice-based module had better scores even on skills they didn’t directly practice. This suggests real instructional growth, not just better performance on a practiced task.

- The practice-based group showed meaningful improvement on rubrics that assess dimensions of teaching quality beyond those taught in the practice-based modules.

- The effects of the practice-based modules are almost as large as those of a year of one-on-one coaching with in-service teachers.

- The average effect of this two-week online module across all three classroom observation outcomes was 0.46 SD. The gold standard benchmark for improving teachers' classroom instruction is sustained instructional coaching, the kind that happens over an entire school year with a dedicated coach observing lessons and giving feedback. A major meta-analysis of that research (Kraft et al., 2018) puts the average effect at 0.49 SD.

- This means that a brief intervention in a teacher prep program for pre-service teachers produced effects that rival those of a multi-month, resource-intensive coaching program.
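The 0.46 SD figure can be checked against the three classroom observation effects listed above. The sketch below assumes a simple unweighted mean of the reported point estimates; that is an assumption made here for illustration, and the working paper may pool the outcomes differently. The variable names are hypothetical.

```python
from statistics import mean

# Classroom observation effects as reported in this summary (SD units).
classroom_effects_sd = {
    "SimSE (classroom)": 0.77,
    "QCI": 0.36,
    "MQI": 0.26,
}

# Assumption: simple unweighted average of the three point estimates.
average_effect = mean(classroom_effects_sd.values())

# Coaching benchmark cited in the summary (Kraft et al., 2018).
coaching_benchmark_sd = 0.49
share_of_benchmark = average_effect / coaching_benchmark_sd

print(f"Average classroom effect: {average_effect:.2f} SD")  # prints 0.46 SD
print(f"Share of coaching benchmark: {share_of_benchmark:.0%}")
```

Under that assumption, the module's average classroom effect comes to a little over nine-tenths of the coaching benchmark, which is the basis for describing it as "almost as large."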

POLICY AND PRACTICE IMPLICATIONS

- Replacing reading-and-reflection modules in teacher prep programs with practice-based modules that include opportunities to practice skills and receive expert feedback would likely lead to stronger PST performance. The exact simulation-and-coaching model used here is resource-intensive and not straightforwardly scalable, but emerging work on AI-driven simulations and coaching offers a more practical path to delivering the same active ingredient at scale.

- Both groups in this study received identical content. They learned the same things about students with disabilities, math word problems, and metacognitive modeling. The only difference was what they were asked to do with that content: one group wrote reflections; the other practiced the skill, got feedback, and tried again. The entire performance gap between the groups is attributable to that single difference.

- For PSTs in shortened pathways, practice-based modules can help develop foundational teaching skills before stepping into a real classroom.

- This study suggests that well-designed modules can meaningfully develop skills even outside a traditional student teaching context. For programs that do include student teaching, these modules can serve as a bridge to help PSTs arrive at their placements with foundational skills already developed, rather than treating student teaching as the only place to develop these skills.

- Module requirements likely need to specify how modules are taught, not just which content they cover.

- Many states and programs now require or incentivize completion of online modules on a range of topics (e.g., disability inclusion, dyslexia, culturally responsive teaching) but pay little attention to whether those modules actually develop the skills they target.

- This study demonstrates that how content is taught in a module matters as much as what content is covered.

- AI-driven simulations and coaching may soon make this approach broadly scalable and affordable, but the field needs more research on whether AI versions produce comparable results.

- The authors note that the current implementation requires mixed-reality simulation software, a trained actor to voice student avatars, and skilled coaches, all of which add cost and limit immediate scalability.

- However, the authors' research team has already developed AI-driven versions of both the teaching simulations and the coaching sessions, which substantially reduce per-candidate costs. The next step is rigorous testing of whether these AI-driven experiences produce comparable effects. Fields like medicine and aviation already rely heavily on AI-driven simulation for skill development; education has the same need and should be investing in this research agenda.

FULL WORKING PAPER

This summary is based on the EdWorkingPaper "Practice-Based, Online Modules for Expediting Teacher Skill Development," published April 2026 (EdWorkingPaper No. 26-1460). The full paper can be found at: https://doi.org/10.26300/vp80-mq53.

The EdWorkingPapers Policy & Practice Series is designed to bridge the gap between academic research and real-world decision-making. Each installment summarizes a newly released EdWorkingPaper and highlights the most actionable insights for policymakers and education leaders. This summary was written by Christina Claiborne.